In this blog we take a look at what Deep Learning and Inference are and how they complement one another in AI workflows.

Deep learning is primarily concerned with model creation and training. It entails developing deep neural networks capable of learning and identifying complicated patterns in vast datasets. During the training phase, the model is ‘trained’ to interpret data, make predictions, or classify information based on the examples provided to it. Deep learning’s strength is its capacity to interpret and learn from large volumes of unstructured data, such as photos, text, and audio, making it a versatile tool for a variety of applications.

AI inference is used after a deep learning model has been trained. Inference is the process of using the trained model to generate predictions or judgments based on previously unobserved data. It is about applying the patterns and insights learnt to real-world settings. During the inference stage, for example, a deep learning model trained to recognize speech patterns is used to transcribe and understand spoken words in real-time.

Here’s a simple analogy:

Consider a student (the AI model) attending school (the training process) and learning how to solve math problems (analysing data).

The student practises a lot, makes mistakes, and gradually learns how to solve different types of math problems correctly (the AI model is trained on a large dataset, learning to recognise patterns and make predictions).

Now that this student is out of school, he or she is faced with a real-life situation, such as going shopping and calculating the total cost (applying what they’ve learned to new situations).

This student quickly calculates the total cost (the AI model uses its training to make decisions or predictions about new, unknown data).

Learning

Deep learning is all about learning and improving. It’s the phase where the AI is still a student, learning from examples and getting better.

Doing

Inference is about doing. The AI is no longer a student; it’s using what it learned to solve problems it hasn’t seen before.

While deep learning covers learning from data, with the model repeatedly making and correcting predictions during the training phase, AI inference is solely concerned with applying the trained model to new situations, much like using learned skills in real-world applications.

Let’s take a closer look at what AI Deep Learning and Inference are and how they complement each other.

What is AI Deep Learning

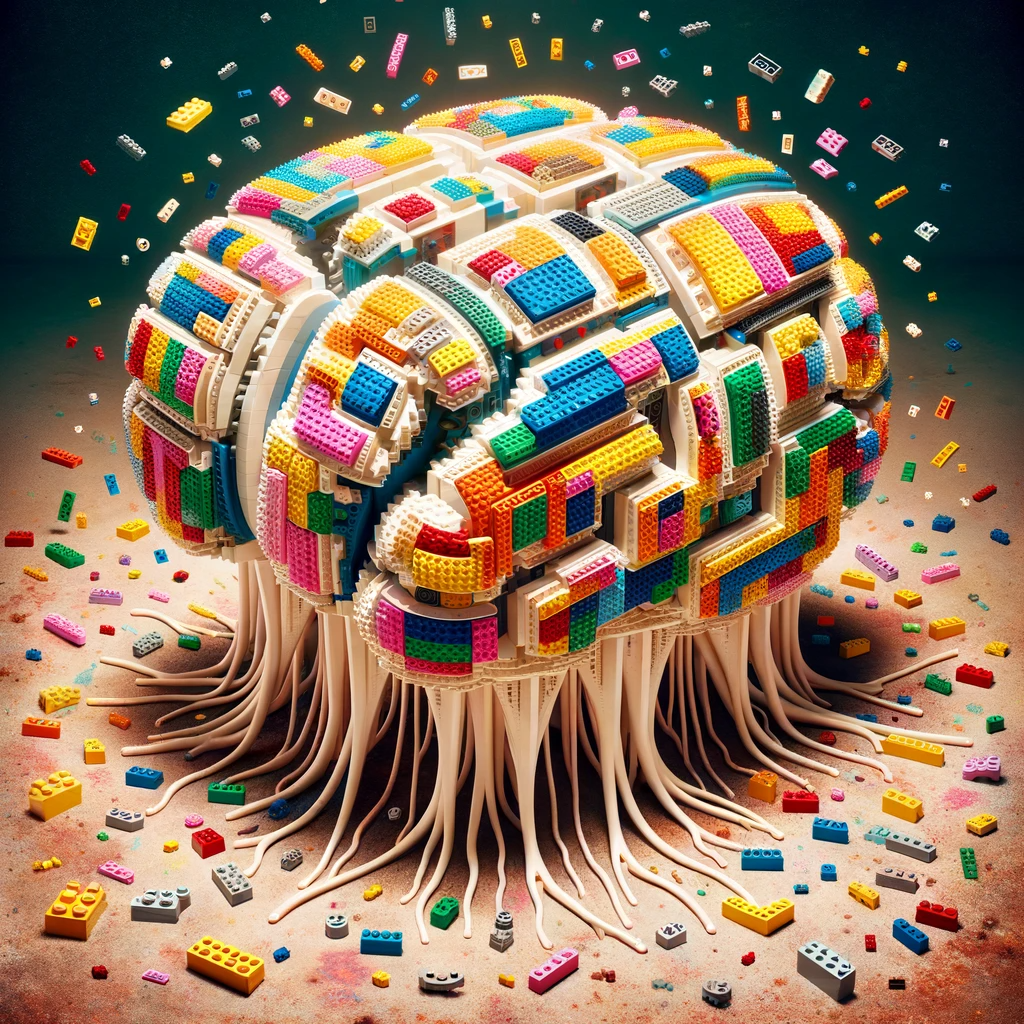

Deep learning is a subset of machine learning, an area of AI that focuses on enabling machines to learn from large sets of data. What sets deep learning apart is its use of neural networks, particularly deep neural networks, which are loosely inspired by the structure and function of the human brain and learn useful representations directly from raw data.

A deep neural network consists of layers of interconnected nodes, or ‘neurons’, with each layer transforming the output of the layer before it. The ‘deep’ in deep learning refers to the number of these layers; the more layers, the deeper the network. Stacked together, these layers are capable of identifying intricate patterns and features in large datasets, a task that is challenging for traditional machine learning algorithms.
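To make the idea of stacked layers a little more concrete, here is a minimal sketch in plain Python. The layer sizes and weight values are made up purely for illustration (a real network would learn them during training), and the helper names `dense`, `relu` and `forward` are our own, not taken from any library.

```python
def relu(values):
    # Common activation function: negative values become zero.
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    # One layer: every output neuron takes a weighted sum of all inputs.
    # `weights` holds one row of weights per output neuron.
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    # Pass the data through each layer in turn.
    activation = inputs
    for index, (weights, biases) in enumerate(layers):
        activation = dense(activation, weights, biases)
        if index < len(layers) - 1:
            # Apply the activation on hidden layers only.
            activation = relu(activation)
    return activation

# Hypothetical two-layer network: 2 inputs -> 2 hidden neurons -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 1.0]], [0.1]),
]

output = forward([2.0, 1.0], layers)
print(output)
```

Adding more entries to `layers` makes this toy network deeper, and that is all the ‘deep’ in deep learning refers to.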

The power of deep learning lies in its ability to handle vast amounts of unstructured data such as images, text, and sound. For instance, in image recognition, deep learning models can learn to identify and classify objects in images with remarkable accuracy, a task that was nearly impossible for machines a few decades ago. Similarly, in natural language processing, deep learning models have been instrumental in achieving breakthroughs in machine translation, sentiment analysis, and conversational AI.

Training deep learning models requires large datasets and significant computational power. The training process involves feeding data through the network, measuring how far its predictions are from the correct answers, and adjusting the network parameters (weights and biases) to reduce that error until the model can accurately recognise patterns and make predictions. The gradients that guide these adjustments are computed by an algorithm known as backpropagation, which is central to the training of deep neural networks.
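The loop of predicting, measuring the error and adjusting the parameters can be sketched in a few lines of plain Python. This is a deliberately tiny example, assuming a single ‘neuron’ with one weight and one bias that has to learn the made-up rule y = 2x + 1. The dataset, learning rate and number of epochs are illustrative choices. In a real deep network, backpropagation applies the same chain-rule step layer by layer.

```python
# Made-up training examples that follow the rule y = 2x + 1.
examples = [(x, 2.0 * x + 1.0) for x in [-2.0, -1.0, 0.0, 1.0, 2.0]]

def train(examples, learning_rate=0.05, epochs=500):
    w, b = 0.0, 0.0  # start with an untrained model
    n = len(examples)
    for _ in range(epochs):
        grad_w, grad_b = 0.0, 0.0
        for x, y in examples:
            prediction = w * x + b        # forward pass
            error = prediction - y        # how wrong the model was
            # Gradients of the mean squared error with respect to w and b.
            grad_w += 2 * error * x / n
            grad_b += 2 * error / n
        # Nudge the parameters in the direction that reduces the error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

w, b = train(examples)
print(round(w, 3), round(b, 3))
```

After training, `w` and `b` end up very close to 2 and 1, the values hidden in the examples.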

One of the most exciting aspects of deep learning is its ability to learn useful features directly from data. Given enough data, deep learning models can keep improving their accuracy as they are trained on more examples, without a human having to hand-craft the rules. This aspect is crucial in applications like autonomous vehicles, where the ability to handle new environments is key.

Deep learning also has drawbacks. The requirement for large datasets and high computational power makes it resource-intensive. There’s also the ‘black box’ problem, where the decision-making process of deep neural networks is not always transparent, making it difficult to understand how certain decisions or predictions are made. This matters in practice because the datasets a model was trained on can bias its output in ways that are hard to spot.

What is AI Inference

At its core, AI inference refers to the process by which a trained AI model makes predictions or decisions based on new, unseen data. This is the stage where the practical application of AI comes into play, distinct from the training phase where the model learns from a dataset. Think of it as the difference between a student learning in a classroom (training) and then taking an exam in an unfamiliar setting (inference).

To understand AI inference, it’s crucial to first grasp the concept of an AI model. These models are essentially sophisticated algorithms that, through a process known as machine learning, have been ‘trained’ on large datasets. This training involves adjusting the model’s parameters so that it can accurately recognise patterns and make predictions. Once trained, the model is ready for inference.

The inference process is where the true power of AI is unleashed. When new data is introduced to a trained model, the model applies its learned patterns to this data to make predictions or decisions. This could range from identifying objects in images and understanding spoken words to predicting market trends and diagnosing diseases from medical images. The applications are as diverse as the fields AI touches.
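In code, inference is simply a forward pass with the parameters frozen. The sketch below assumes a very small classifier whose weights and bias are hypothetical values standing in for the result of a finished training run. Nothing is learned or updated here; the model only applies what it already has to inputs it has not seen before.

```python
import math

# Hypothetical parameters, standing in for the output of a completed training run.
WEIGHTS = [1.5, -2.0]
BIAS = 0.25

def predict_proba(features):
    # Weighted sum of the inputs, squashed into the range 0..1 by the sigmoid function.
    score = sum(w * x for w, x in zip(WEIGHTS, features)) + BIAS
    return 1.0 / (1.0 + math.exp(-score))

def predict(features, threshold=0.5):
    # Turn the probability into a yes/no decision.
    return 1 if predict_proba(features) >= threshold else 0

# New, previously unseen inputs.
print(predict([2.0, 0.5]))
print(predict([0.0, 1.0]))
```

Notice that there is no training loop at all. That is why inference is usually far cheaper per example than training.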

One of the key aspects of AI inference is its need for speed and efficiency. In many applications, such as autonomous vehicles or real-time language translation, decisions need to be made in a fraction of a second. This requirement has spurred significant advancements in both software and hardware designed specifically for AI inference tasks. Specialized processors like GPUs (Graphics Processing Units) and TPUs (Tensor Processing Units) are increasingly used to accelerate inference tasks.
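A simple way to see whether a model is fast enough is to time it. The sketch below uses Python’s standard `time.perf_counter` to average the latency of many calls. The `toy_model` function and the 0.1 second budget are assumptions made up for this example, not figures from any real system.

```python
import time

def toy_model(features):
    # Hypothetical stand-in for a trained model: a fixed weighted sum.
    weights = [0.4, -0.2, 0.1]
    return sum(w * x for w, x in zip(weights, features))

def average_latency_seconds(model, features, runs=1000):
    model(features)  # warm-up call so one-off setup cost is not timed
    start = time.perf_counter()
    for _ in range(runs):
        model(features)
    return (time.perf_counter() - start) / runs

LATENCY_BUDGET_SECONDS = 0.1  # assumed budget, chosen only for illustration

latency = average_latency_seconds(toy_model, [1.0, 2.0, 3.0])
within_budget = latency <= LATENCY_BUDGET_SECONDS
print(f"average latency: {latency:.6f}s, within budget: {within_budget}")
```

The numbers will differ from machine to machine, which is exactly why latency is usually measured on the hardware the model will actually run on.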

The accuracy of inference depends heavily on the quality and breadth of the training data. A model trained on limited or biased data may perform poorly when exposed to diverse, real-world data. This is known as the problem of generalisation in AI: how well a model performs on data it was not trained on. There is also a constant trade-off between the complexity of the model and the speed of inference. More complex models, while potentially more accurate, require more computational power and time to process data.
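One rough way to put numbers on this trade-off is to count how many parameters a model has and how many multiply-and-add operations it needs for a single prediction. The sketch below does this for fully connected layers only, and the two layer layouts are hypothetical examples chosen to show the gap. Real inference time also depends on the hardware and software used.

```python
def dense_cost(layer_sizes):
    # layer_sizes lists the number of neurons per layer, input first.
    params = 0
    multiply_adds = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        params += n_in * n_out + n_out   # weights plus one bias per neuron
        multiply_adds += n_in * n_out    # work done for one prediction
    return params, multiply_adds

# Hypothetical small and large networks with the same input and output sizes.
small = dense_cost([784, 32, 10])
large = dense_cost([784, 512, 512, 10])

print("small:", small)   # (25450, 25408)
print("large:", large)   # (669706, 668672)
```

In this made-up comparison the larger network needs roughly 26 times more arithmetic per prediction, which is the kind of cost that has to be weighed against any gain in accuracy.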

Additional Information

Deep Neural Networks

Let’s take a moment to discuss what Deep Neural Networks are.

Imagine you have a big box of LEGOs, and you want to build a model of something, like a house or a car. In AI Deep Learning, a deep neural network is like a very advanced LEGO model. It’s made up of lots of small pieces (called neurons) that are connected together in layers.

Just like how you would follow instructions to build your LEGO model, the deep neural network gets instructions from the data it sees. If you’re building a LEGO car, you start with the base, add wheels, then the body, and so on. In a deep neural network, each layer of neurons is like a step in the building process. The first layer might learn simple things, like lines and edges in a picture. The next layer learns to combine these lines into shapes, like squares or circles. As you go deeper into the network, the layers learn more and more complex things.

When you show a picture to this network, it starts at the first layer, and each layer works out a little bit more about the picture. By the time the picture has gone through all the layers, the network has extracted enough information to decide what it shows, such as whether it’s a picture of a cat, a dog, or a car.

The network learns by looking at lots of pictures and being told what they are. At first, it might make mistakes, like thinking a dog is a cat. But just like you get better at building with LEGOs the more you do it, the network gets better at understanding pictures the more it sees. This learning process is what makes deep neural networks a powerful tool in AI Deep Learning.
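The idea of making mistakes and then getting better can be shown with a perceptron, a single artificial neuron that is much simpler than a deep network. This is only a sketch: the two numbers describing each ‘picture’ and the labels (1 for dog, 0 for cat) are invented for illustration, and a real image model would work on pixels with many layers.

```python
def predict(weights, bias, features):
    # Guess 1 ("dog") if the weighted sum is positive, otherwise 0 ("cat").
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0

def train_perceptron(examples, epochs=5, learning_rate=1.0):
    weights = [0.0, 0.0]
    bias = 0.0
    mistakes_per_epoch = []
    for _ in range(epochs):
        mistakes = 0
        for features, label in examples:
            guess = predict(weights, bias, features)
            if guess != label:
                # Wrong guess: shift the weights towards the correct answer.
                mistakes += 1
                step = learning_rate * (label - guess)
                weights = [w + step * x for w, x in zip(weights, features)]
                bias += step
        mistakes_per_epoch.append(mistakes)
    return weights, bias, mistakes_per_epoch

# Made-up labelled examples: (features, label).
examples = [
    ([1.0, 1.0], 1),
    ([2.0, 2.0], 1),
    ([-1.0, -1.0], 0),
    ([-2.0, -1.0], 0),
]

weights, bias, mistakes_per_epoch = train_perceptron(examples)
print(mistakes_per_epoch)
```

The list of mistakes starts above zero and drops to zero once the neuron has seen enough examples, which is the same pattern, on a much smaller scale, as a deep network improving with practice.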